Daily Image

13-09-2019

Precision requirements for Epoch of Reionization imaging

| Submitter: | The LOFAR EoR team |

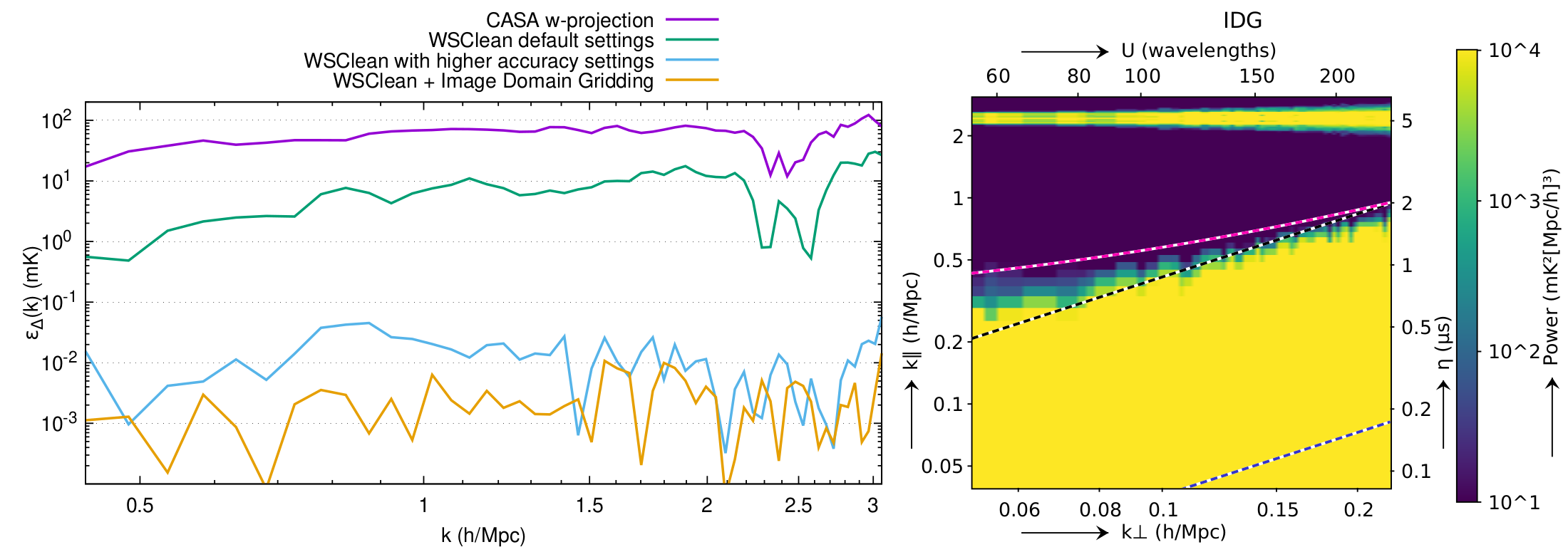

| Description: | The LOFAR Epoch of Reionization (EoR) project has come another step closer to understanding how our Universe evolved after the first stars were born. Detecting signals from 13 billion years ago with LOFAR requires extremely high processing accuracy. In a new publication, the team examined the imaging step, which transforms calibrated visibilities into a map of the sky. They found that one particular simplification made by most imaging software, the discretization of the gridding kernel, is detrimental to the accuracy. As a result, imagers based on w-projection or faceting cannot reach the required accuracy. WSClean and IDG, two imaging algorithms developed by ASTRON employees, both achieve sufficient accuracy for detecting the weak cosmological signals. Among the tested algorithms, IDG turned out to be the most accurate and, because IDG can make efficient use of GPUs, also the fastest. Understanding the processing requirements is important for LOFAR, but even more so for SKA-Low, for which EoR is a major science case. The left plot in the image shows the error caused by imaging with different algorithms or settings, as a function of the scale of the signal, expressed as the wavenumber k. The cosmological signal is expected to be a few millikelvin at low k-values, so the imaging error must be well below this level. The right plot shows the circularly averaged 21-cm power spectrum produced by IDG from simulated data. Article: Precision requirements for interferometric gridding in 21-cm power spectrum analysis |

| Copyright: | CC-by-SA |
