Daily Image

13-05-2019 Eradicate data loss of the APERTIF Data Writer for Imaging

| Submitter: | Marcel Loose |

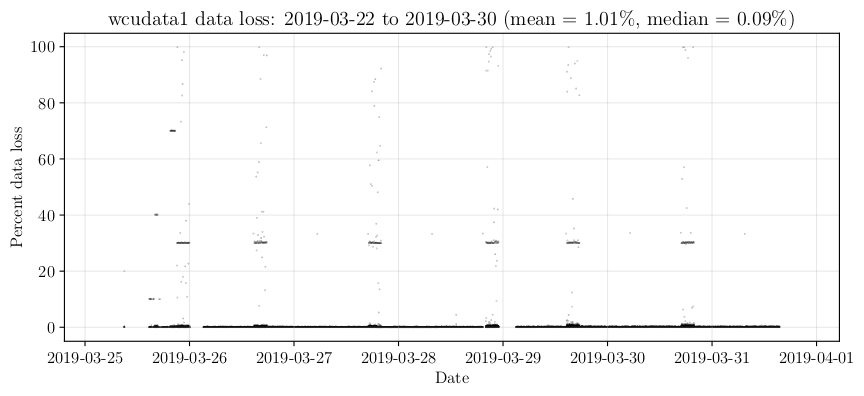

| Description: | The Data Writer is a crucial part of the APERTIF system when running in imaging mode. It is responsible for receiving the correlated UV data from the UniBoards, flagging and further integrating the data, and writing up to 40 different Measurement Sets to disk, each containing the UV data of a single compound beam. During commissioning, we noticed significant data loss in the internal buffers of the Data Writer while it was writing data to disk. In 8-bit mode (200 MHz), we were losing 2-3% of the data on average. In 6-bit mode (300 MHz), the loss peaked at a whopping 20%, which was completely unacceptable. Further analysis showed that data loss started to occur once the Linux disk cache had consumed all free RAM. At that moment there was a sudden increase in CPU load, probably caused by the Linux kernel trying very hard to free up cache memory. Since we had limited time to adapt the software, we tried the sledgehammer approach: force the Linux kernel to drop its cache every minute, well before it would be full. Hesitant at first, because many Linux experts consider this bad practice, we tried it on some short 5-10 minute observations. It was startling to see that the data loss dropped to almost 0%. Surprisingly, other processes that read data from the disk, like the data ingest to ALTA (APERTIF Long Term Archive), did not suffer from the cache being dropped. The plot shows the data loss at the Data Writer during the second week of the APERTIF Science Verification Campaign, during which we flushed the disk cache every minute. The few peaks in loss are caused by a small bug in the calculation of the data loss at the end of an observation. These peaks strongly affect the mean values, so it is better to look at the median. The data loss is now approximately 0.1%, which is acceptable. Thanks to John Romein for helping to solve this issue and Vanessa Moss for providing the plot. |
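
The description does not say how the cache was dropped in practice. As an illustration only, here is a minimal Python sketch of the general technique, assuming the standard Linux `/proc/sys/vm/drop_caches` interface (writing to it requires root). The function names, the choice of mode `1` (page cache only), and the loop structure are hypothetical and not taken from the APERTIF software.

```python
import os
import time

# Standard Linux kernel interface; writing to it requires root privileges.
DROP_CACHES_PATH = "/proc/sys/vm/drop_caches"


def drop_page_cache(path=DROP_CACHES_PATH, mode=1):
    """Ask the kernel to drop clean cache entries.

    mode 1 = page cache, 2 = dentries and inodes, 3 = both.
    Which mode the APERTIF team used is not stated; 1 is an assumption.
    """
    if mode not in (1, 2, 3):
        raise ValueError("mode must be 1, 2 or 3")
    # drop_caches only frees clean pages, so flush dirty pages to disk first.
    os.sync()
    with open(path, "w") as f:
        f.write(f"{mode}\n")


def run_periodically(action, interval_s=60.0, iterations=None, sleep=time.sleep):
    """Call `action` every `interval_s` seconds.

    Runs forever when `iterations` is None; otherwise stops after that many
    calls. `sleep` is injectable so the loop can be exercised without waiting.
    Returns the number of calls made.
    """
    count = 0
    while iterations is None or count < iterations:
        action()
        count += 1
        if iterations is None or count < iterations:
            sleep(interval_s)
    return count
```

Run as root, `run_periodically(drop_page_cache, interval_s=60.0)` would drop the page cache once a minute, matching the interval mentioned above. A cron job or systemd timer writing to the same file would be an equally plausible way to do this.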

| Copyright: | ASTRON & Marcel Loose |
